Why CDNs Like Computational Storage

When most people think of content delivery networks (CDNs), they think about streaming huge amounts of content to millions of users, with companies like Akamai, Netflix, and Amazon Prime coming to mind. What most people don’t think about in the context of CDNs is computational storage – why would these guys need a technology as “exotic” as in-situ processing? Sure, they have a lot of content – Netflix has nearly 7K titles in its library, while Amazon Prime has almost 20K titles; but at 5GB per title, that is only 35TB for Netflix, and 100TB for Amazon Prime. These aren’t the petabyte sizes that one typically thinks of when discussing computational storage.
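
The back-of-the-envelope sizing above is easy to reproduce. The snippet below is only a sketch of that arithmetic: the title counts and the 5GB average come from the text, while the function name `library_size_tb` and the use of decimal terabytes (1 TB = 1000 GB) are assumptions made for illustration.

```python
GB_PER_TITLE = 5  # average size per title, as assumed in the text


def library_size_tb(titles: int, gb_per_title: float = GB_PER_TITLE) -> float:
    """Total library size in decimal terabytes (1 TB = 1000 GB)."""
    return titles * gb_per_title / 1000


netflix_tb = library_size_tb(7_000)   # "nearly 7K titles"
prime_tb = library_size_tb(20_000)    # "almost 20K titles"

print(f"Netflix: {netflix_tb:.0f} TB, Amazon Prime: {prime_tb:.0f} TB")
```

With these inputs the script prints `Netflix: 35 TB, Amazon Prime: 100 TB`, matching the figures quoted above.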

So why would computational storage be important to CDNs? Two phrases summarize it all: encryption/Digital Rights Management (DRM) and locality of service. For CDNs that serve up paid content, the user’s ability to access the content must be verified (this is the DRM part), and then the content must be encrypted with a key that is unique to that user’s equipment (computer, tablet, smartphone, set-top box, etc.). When combined with the need to position points of presence (PoPs) in multiple global locations, the cost of this infrastructure (if based on standard servers) can be significant.

Computational storage helps to significantly reduce these costs in a couple of ways. Our ability to search subscriber databases directly on the SSD eliminates the need for expensive database servers, significantly reducing the PoP footprint. Our ability to encrypt content on our computational storage devices also eliminates the servers that typically perform this task. When you consider that six of our 16TB U.2 SSDs could hold the entire Netflix library (with three SSDs for redundancy), you can see how this technology could be important to CDNs. Want more information on how computational storage can help the content delivery network industry? Just contact us at nvmestorage.com.
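
The drive count can be sanity-checked in a few lines. This is a rough sketch that assumes "redundancy" means keeping a second full copy of the library (simple mirroring); the text does not say which redundancy scheme is used, and `drives_needed` is a hypothetical helper.

```python
import math


def drives_needed(library_tb: float, drive_tb: float, copies: int = 2) -> int:
    """Drives needed to hold `copies` full copies of a library.

    Assumption: redundancy is modelled as plain mirroring (copies=2).
    """
    drives_per_copy = math.ceil(library_tb / drive_tb)
    return drives_per_copy * copies


# 35TB library on 16TB drives: 3 drives per copy, 2 copies
netflix_drives = drives_needed(35, 16)
print(netflix_drives)
```

Under that mirroring assumption the result is 6, which is consistent with the six-drive figure above: three drives for content and three for redundancy.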

Amsterdam is blocking new datacenters.

Too much energy consumption and space allocation! What can we do?

Datacenter capacity in and around Amsterdam grew by 20% in 2018 according to the Dutch Data Center Association (DDA), outpacing rival data center locations such as London, Paris and Frankfurt. But that growth comes with a problem: data centers claim too much space and consume too much energy. Therefore the Amsterdam city council has decided to impose a temporary building stop for new data centers until a new policy is in place to regulate growth.

At present, local governments have no control over new initiatives, and energy suppliers have a legal obligation to provide power. The problem is that the growth of datacenter capacity is counterproductive to the climate ambitions that governments have laid down in legislation. Therefore, regulation enforcing sustainable growth, with green energy and delivery of residual heat back to consumer households, will be mandated in line with those climate ambitions.

For now, the building stop is for one year. The question is what we can do in the meantime. Or are we going to sit, wait and do nothing?

If you cannot grow in performance and capacity outside the current floor space, the most sensible thing to do is to reclaim space within the existing datacenters. There are a few practical changes possible that have minor impact on operations but a huge impact on density and efficient usage of currently available floor space.

- Use lower-power, larger-capacity NVMe SSDs instead of spinning disks and high-performance, energy-slurping first-generation NVMe SSDs. Standard 32TB NVMe SSDs that draw less than 12 W are already available. Equipping a 24-slot 2U server with them delivers 768TB of storage capacity in just 2U of rack space at less than ½ watt/TB. NGD Systems is the front runner in delivering these largest-capacity NVMe SSDs at the lowest power consumption rates. No changes required: just install and benefit from low power and large capacities.

- Reduce the number of servers, CPUs and RAM, and reduce the movement of data, by processing secondary compute tasks such as inference, encryption, authentication and compression on the NVMe SSD itself. NGD Systems calls this Computational Storage. Simply explained: install a quad-core ARM CPU on every NVMe SSD, and standard Linux applications can run directly on the drive. A 24-slot 2U server can host 96 additional Linux cores that augment the existing server, creating an enormously efficient compute platform at very low power and replacing many unbalanced x86 servers. Change required: look at the application landscape, determine which applications are using too many resources, and migrate them off one by one.

- Disaggregate storage from CPU. There is huge inefficiency in server farms: lots of idle CPU time and unbalanced storage-to-CPU ratios. Eliminating this imbalance is relatively simple and increases storage and CPU utilization and efficiency. Application servers mount exactly the storage volumes they require from a networked storage server over the existing network infrastructure, at the same low latencies as if the NVMe SSD were inside the server chassis. The people at Lightbits Labs have made it their mission to tackle the problem of storage inefficiencies in the datacenter. Run POCs to determine where the improvements are.

- The simplest method to reclaim space is to throw away what you are not using anymore, or to move it outside to where the rent is cheaper and space is widely available. If you know what data you have and what that data is worth, actions to save, move or delete it can be put into policies and automated. Komprise has the perfect toolset to analyze, qualify and move data to where it sits best, including to the waste bin. Run a simple pilot and check the cost savings.
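
The capacity, power and core figures in the first two bullets can be checked with a few lines of arithmetic. This is a minimal sketch, not a vendor sizing tool: it treats the 12 W figure as an upper bound, and it assumes, purely for illustration, that the same 32TB drive also carries the quad-core ARM CPU described in the second bullet. `Drive` and `chassis_summary` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Drive:
    capacity_tb: float  # usable capacity per drive
    power_w: float      # worst-case power draw per drive
    cores: int = 0      # on-drive CPU cores (0 for a plain SSD)


def chassis_summary(drive: Drive, slots: int) -> dict:
    """Aggregate capacity, power and on-drive cores for a fully populated chassis."""
    capacity = drive.capacity_tb * slots
    power = drive.power_w * slots
    return {
        "capacity_tb": capacity,
        "power_w": power,
        "watts_per_tb": power / capacity,
        "extra_cores": drive.cores * slots,
    }


# 24-slot 2U server filled with 32TB drives at up to 12 W, 4 ARM cores each
summary = chassis_summary(Drive(capacity_tb=32, power_w=12, cores=4), slots=24)
print(summary)
```

For these inputs the summary reports 768TB of capacity, at most 288 W for the drives, 0.375 W/TB (under the ½ watt/TB quoted above) and 96 additional cores.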

What is happening in the Amsterdam area today is something we will see more and more, and it will kick off many more initiatives in other areas to regulate data center growth and bring it in line with our climate ambitions. If we start banning polluting diesel cars from our inner cities and taxing them, why could we not have the same discussion about power-slurping old metal boxes with SATA spinning rust within data centers? I am pretty sure that regulators will encourage good behavior with permits and discourage bad behavior with taxes in the not too distant future.

Maybe it is time to start thinking about Watts/TB instead of $/GB

NGD Systems Becomes First to Demonstrate Azure IoT Edge using Computational Storage

NGD Systems, the world leader in NVMe Computational Storage, announced today at the Flash Memory Summit that it has embedded the Azure IoT Edge service directly within its Computational Storage solid-state drives (SSDs), making them the only platform to support the service directly within a storage device.

Lower Network Threat Detection cost with Computational Storage

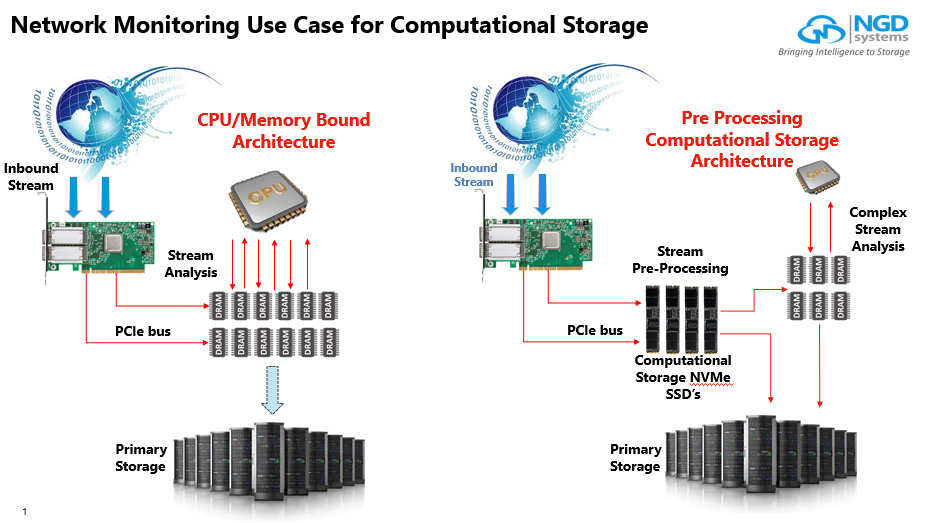

Network Threat Detection Systems require huge amounts of DRAM and CPU to analyse streams. When Computational NVMe SSDs pre-process the streams, the clean streams can bypass the main CPU and DRAM and move directly to primary storage, whereas only the dirty streams are moved to DRAM and CPU for deeper inspection. The left-hand side of the figure shows the classical CPU/DRAM-bound architecture; the right-hand side shows Computational NVMe SSDs taking over the pre-processing compute tasks.

The clear benefits are:

- Infinitely scalable analysis buffer using lower-cost NVMe SSDs (as opposed to more expensive DRAM)

- In-situ processing allows the incoming stream to be pre-processed and either sent directly to primary storage (if clean) or sent into main memory for further analysis (if dirty)

- The architecture allows for system cost reductions by reducing the amount of DRAM needed for the analysis buffer and by reducing the x86 CPU cycles required for analysis, which can reduce core count (most of these boxes use tens of cores, so there is a lot of money on the table here)
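
The clean/dirty split described above can be pictured as a simple triage step. The sketch below only models the routing logic in ordinary Python; it is not NGD Systems code and does not run on a drive. The marker list, `looks_dirty` and `triage` are hypothetical, and a real detection engine would use far richer rules than substring matching.

```python
from typing import Callable, Iterable, List, Tuple

# Hypothetical byte patterns standing in for a real detection rule set
SUSPICIOUS_MARKERS = (b"/etc/passwd", b"<script>", b"drop table")


def looks_dirty(payload: bytes) -> bool:
    """Cheap first-pass check of the kind that could run next to the data."""
    lowered = payload.lower()
    return any(marker in lowered for marker in SUSPICIOUS_MARKERS)


def triage(
    streams: Iterable[bytes],
    is_dirty: Callable[[bytes], bool] = looks_dirty,
) -> Tuple[List[bytes], List[bytes]]:
    """Split streams into (clean, dirty).

    Clean streams would go straight to primary storage; only dirty
    streams would be handed to host DRAM and CPU for deep inspection.
    """
    clean: List[bytes] = []
    dirty: List[bytes] = []
    for payload in streams:
        (dirty if is_dirty(payload) else clean).append(payload)
    return clean, dirty


streams = [b"GET /index.html", b"GET /../../etc/passwd", b"POST /login"]
clean, dirty = triage(streams)
print(len(clean), "clean,", len(dirty), "dirty")
```

In this toy run two of the three streams are clean and never touch host memory, which is the effect the bullets above describe: the smaller the dirty fraction, the less DRAM and host CPU the deep-inspection stage needs.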

Computational Storage is a concept that has the power to deliver a huge business benefit: faster results at lower cost per result. With a fully functional quad-core 64-bit ARM processor on each of the NVMe SSDs in the server, the SSDs are used not only to store large amounts of data but also to process and analyze data right where it was stored in the first place. The main CPU, GPU and DRAM are used only for very demanding compute tasks, whereas secondary functions like search, indexing, pre-analytics and encryption are processed inside the NVMe SSD. All the SSDs work together as compute nodes in a distributed compute cluster inside the server chassis.
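
One way to picture drives acting as compute nodes is a scatter-gather search: each drive scans its own data and returns only the matches. The following is a host-side simulation under stated assumptions, with threads standing in for drives; `search_on_drive` and `scatter_gather` are hypothetical names, not part of any NGD Systems API.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List


def search_on_drive(shard: List[str], term: str) -> List[str]:
    """Simulates work done on one drive: scan the local shard, return only matches."""
    return [record for record in shard if term in record]


def scatter_gather(shards: List[List[str]], term: str) -> List[str]:
    """Send the same query to every simulated drive and merge the small result sets."""
    with ThreadPoolExecutor(max_workers=max(1, len(shards))) as pool:
        partials = list(pool.map(search_on_drive, shards, [term] * len(shards)))
    return [hit for partial in partials for hit in partial]


# Three simulated drives, each holding part of a subscriber list
shards = [
    ["alice:gold", "bob:free"],
    ["carol:gold"],
    ["dave:free"],
]
hits = scatter_gather(shards, "gold")
print(hits)
```

The point of the sketch is the data flow rather than the threading: only the matching records cross back to the host, instead of every record being read into host DRAM before it is filtered.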

With Computational Storage, overall compute performance and storage capacity per node increase significantly, while less equipment, less IO, less power and less floor space are required.

The result: faster results at lower processing cost.